How hard can generating 1024-bit primes really be?

Prime numbers are fascinating!

On the one hand they are easy to explain, they are just numbers that have no factors other than one and themselves, but on the other hand they contain endless complexity. They show up in numerous places, ranging from mathematical concepts and conjectures to interesting looking visualizations and cryptography, underpinning many internet standards and security protocols we use everyday.

Despite my fascination with primes I never really explored them in detail. So, I thought I would challenge myself, and what better way to explore primes than to use my interest in coding to generate prime numbers!

The Challenge

But what kind of prime should I generate? Finding the one billionth prime is too easy, and getting on the leaderboard of the largest known primes is way beyond what I think I can achieve in my first attempt. Combining primes with my interest in cryptography I came up with this -

Generate primes that are capable of generating keys for the RSA Algorithm

As of the writing of this article a good length for RSA keys is 2048 bits. RSA keys are generated by the multiplication of two primes, so to get a 2048-bit key we need two roughly 1024-bit sized primes. That narrows down the challenge to generate 1024-bit primes and now you know why that’s the size in the title.

In addition to the challenge, I also set up some rules for myself:

- The code has to be written from scratch - otherwise you could just

openssl prime -generate -bits 1024and be done! “from scratch” here just means no external dependencies. - No fancy external hardware or cloud - so you can’t just throw additional computing power at the problem. I will use my laptop with an AMD Ryzen 7 CPU and 16gb RAM.

- Generate the primes in “reasonable” time - deliberately left vague so that I optimize a little but not get caught in a over-optimization spiral.

For the language I picked Rust, mainly because I happened to be learning it recently and this challenge looked like it would be good practice. I feel Rust is low level enough to play around with deeper concepts while being high level enough that the code snippets are relatively easy to understand. I won’t be using any of the more complex features of rust because I am not familiar with them yet.

With all that out of the way, let’s get started!

16 bits, the easy bit!

My plan is to slowly build up to 1024 bits, so I started at 16 bits as a bit of a warm up. In theory, the process to generate any N-bit primes is easy -

while true {

let number = <<random N-bit integer>>

if is_prime(number) {

break

}

}

Just keep generating new random N-bit numbers until one that passes the primality test is found. Even before I can tackle primality tests though I have my first hurdle, where do I get my random numbers from? Rust has an excellent crate (aka library/package) called rand that can almost be considered part of the standard library. But before I break my “no dependencies” rule right at the start I thought I should at least try to do it myself.

I remember hearing about /dev/urandom from somewhere, and upon further research it turns out this would fit my use case perfectly. The Linux kernel has a built-in Cryptographically Secure Pseudo Random Number Generator (CSPRNG) which can be accessed by reading from the pseudo device file /dev/urandom. It collects entropy from the user’s environment and uses it to periodically seed a deterministic stream cipher called ChaCha20 (fun name!), which can then generate some “true” random bits. I was hesitant to use this at first but a certain article convinced me otherwise.

This is the implementation I came up with -

// rng.rs

use std::fs::File;

use std::io::Read;

fn insert_random_bytes(mut bytes: &mut[u8]) -> std::io::Result<()> {

File::open("/dev/urandom")?.read_exact(&mut bytes)?;

Ok(())

}

fn u16() -> u16 {

let mut bytes = [0u8; 2];

insert_random_bytes(&mut bytes).expect("Cannot access /dev/urandom");

u16::from_le_bytes(bytes)

}

Note: expressions without a semicolon placed at the end of rust functions act as the function’s return value.

insert_random_bytes() takes in a mutable array of bytes as input and fills it with the output from /dev/urandom. The u16() function creates a buffer of 2 bytes (16 bits), fills the buffer with random bits and then creates a u16 integer from those bits, with u16 in rust representing an unsigned 16-bit integer. u16() is then used like this -

fn run() {

println!("random no - {}", rng::u16() | 0b1000000000000001);

}

The random number returned is OR-ed with 0b1000000000000001 to set its first and last bit to 1. The last bit set to 1 makes it an odd number and the first bit set to 1 ensures that it is a sufficiently large number which covers the entire range of bits I need.

Here’s it generating a few 16-bit random numbers -

random no - 36111

random no - 52205

random no - 45689

random no - 33631

Now that I have my very own random number generator let’s quickly finish out 16-bit primes. First, a fancy enum to store our results -

enum PrimeResult {

Prime,

Composite,

}

Then, a basic primality test called trial division to check if a number is prime. It loops from 3 to sqrt(num) and checks if any of them is a factor of num -

fn trial_division_simple(n: u16) -> PrimeResult {

let root_n = (n as f64).sqrt() as u16;

for x in 3..root_n {

if n % x == 0 {

return PrimeResult::Composite;

}

}

PrimeResult::Prime

}

And a basic loop to finish it off -

fn run() {

loop {

let num = rng::u16() | 0b1000000000000001;

if trial_division_simple(num) == PrimeResult::Prime {

println!("Prime found: {num}");

break;

}

}

}

➜ time cargo run --release

Prime found: 44809

cargo run --release 0.03s user 0.01s system 99% cpu 0.038 total

This works nicely and on average takes ~40ms to generate 16-bit primes. To confirm that my primes are actually prime, I am using a cool online tester written in WebAssembly (hosted here) along with this OpenSSL command - openssl prime <number>.

With this, we have refreshed some basic concepts and warm up is done. Now it’s time to move on to the next step!

64 bits, 4 times the bits!

After 16 bits I jumped straight to 64-bit numbers. 64-bit architecture is common nowadays on most modern hardware and with 64 bits we are well into the 20 digit numbers range (for context, 1 trillion is 13 digits). Would the simple trial division algorithm be able to handle such large numbers?

➜ time cargo run --release

Prime found: 14288847644715868907

cargo run --release 30.27s user 0.02s system 99% cpu 30.294 total

It does, kinda. 30 seconds to generate a 64-bit prime does not look great but this is not trial division’s full potential. In this section, I will try to push it to its limits.

First, here’s a more optimized version of trial division -

fn trial_division(n: u64, start: u64) -> PrimeResult {

// assumption: n is odd, n > 3 and start > 3

if n % 3 == 0 {

return PrimeResult::Composite;

}

let root_n = (n as f64).sqrt() as u64;

for x in (start..(root_n + 1)).step_by(6) {

if n % x == 0 || n % (x + 2) == 0 {

return PrimeResult::Composite;

}

}

PrimeResult::Prime

}

Changes:

- It also accepts a

startparameter for where to start the loop from. - The loop steps forward by 6 instead of 1.

- Check

n % (x + 2) == 0in addition ton % x == 0.

Basically, it only considers factors between start and sqrt(n) that are of the form 6k+1, to quote Wikipedia -

This is because all integers can be expressed as (6k+i), where i = −1, 0, 1, 2, 3, or 4. Note that 2 divides (6k+0), (6k+2), and (6k+4) and 3 divides (6k+3). So, a more efficient method is to test whether n is divisible by 2 or 3, then to check through all numbers of the form 6k±1 ≤ √n. This is 3 times faster than testing all numbers up to √n.

Next, a function to generate a list of small primes using this improved trial division -

fn generate_small_primes<const N: usize>() -> [u64; N] {

let mut primes: [u64; N] = [0; N];

primes[0] = 2;

primes[1] = 3;

let mut n: u64 = 3;

let mut nth: u64 = 2;

let mut i: usize = 2;

let limit = N as u64;

loop {

n += 2;

if trial_division(n, 5) == PrimeResult::Prime {

primes[i] = n;

i += 1;

nth += 1;

if nth == limit {

return primes

}

}

}

}

Add another possible state to our result -

enum PrimeResult {

Prime,

Composite,

Unknown, // <----- new

}

And then use the list of small primes to do a pre-check for easily divisible numbers before reverting to trial division -

fn primes_64bit() {

const N: usize = 10000;

let start = (N + 1) as u64;

let primes = utils::generate_small_primes::<N>();

loop {

let num = rng::u64() | 0x8000000000000001u64;

let mut result = PrimeResult::Unknown;

for i in 0..N {

if num % primes[i] == 0 {

result = PrimeResult::Composite;

break;

}

}

if result == PrimeResult::Unknown {

result = algos::trial_division(num, start)

}

if result == PrimeResult::Prime {

println!("Prime found: {num}");

break;

}

}

}

After all that work, here’s the result -

➜ time cargo run --release

Prime found: 12589778476493955313

cargo run --release 6.40s user 0.01s system 99% cpu 6.414 total

That’s a nice improvement from the 30 seconds it took previously. There’s still some optimization potential left on the table, but if it takes 6 seconds for just 64-bit numbers then it’s clear that this cannot scale to 1024-bit numbers.

With this, we have to leave behind the safe cozy lands of deterministic algorithms and enter the realm of uncertainty with probabilistic algorithms!

128 bits, with a bit of a twist!

This is where things start to get interesting. At first, I found the concept of probabilistic primality tests strange and tried to look for deterministic algorithms that could handle huge numbers. I did find two - APR-CL and ECPP. Both of these are so mathematically complex that I could not make sense of their research papers at all, and there isn’t much accessible information about them on the internet for someone like me who is bad at math.

After taking a look at discussions online, OpenSSL’s source code and recommendations by NIST, I realized that almost everyone including RSA uses probabilistic algorithms. The catch is that if implemented properly, these algorithms have an extremely low error rate which is negligible. From this point on all algorithms that show up will not “prove” that a number is prime, but will say that it is a “probable prime” with a certain accuracy. The first of these algorithms I explored was Fermat’s Little Theorem.

Fermat’s Little Theorem

This theorem by Fermat states: If is prime and is any integer not divisible by , then the number is divisible by . The same thing can be expressed in modular arithmetic as:

We can pick different values for where , so by definition would not be divisible by , and in theory plugging those values into this relation would tell us whether is prime or not.

All we have to do is implement this relation in code, beginning with and :

fn run() {

let num = rng::u128() | 0x80000000000000000000000000000001u128;

let base = 2u128;

println!("{}", base.pow(num - 1);

}

➜ cargo run

...

error[E0308]: mismatched types

--> src/lib.rs:71:29

|

71 | println!("{}", base.pow(num - 1);

| --- ^^^^^^^ expected `u32`, found `u128`

| |

| arguments to this function are incorrect

It took me a minute to realize that this was not an error on my part. The pow() function intentionally takes in a u32, as raising u128 to any higher power would already overflow the u128! Fortunately, our relation above is in modular arithmetic which means we can take the modulus at each step instead of at the end keeping the result less than u128.

basically,

and so -

The algorithm to implement this is called modular exponentiation. I implemented it by directly following the pseudocode from Wikipedia, using the version that implements “exponentiation by squaring” for a more efficient algorithm.

Here goes attempt #2 -

fn run() {

let num = rng::u128() | 0x80000000000000000000000000000001u128;

let base = rng::u128_range(2, num - 1);

println!("{}", mod_exp(base, num - 1, num));

}

➜ cargo run

...

thread 'main' panicked at 'attempt to multiply with overflow', src/utils.rs:38:16

note: run with `RUST_BACKTRACE=1` environment variable to display a backtrace

Oh well.

Another thing I didn’t realize is that even the multiplication of two u128 can easily become too large for a u128 to store. Defeated for now, I decided to move ahead by storing only 64-bit numbers inside the u128s. Roughly speaking the most amount of space needed for multiplication of two N-bit numbers is 2N, hence the decision to store 64-bit numbers inside u128. This idea of allocating twice the amount of bits needed will show up later too. Interestingly, the previous 64-bit step with trial division had no multiplications which is why it did not run into this issue.

Adding another possible state to the enum -

enum PrimeResult {

Prime,

Composite,

Unknown,

ProbablePrime, // <----- new

}

And here’s the Fermat test implementation. It just runs the equation k times with random bases -

fn fermat_test(num: u128, k: usize) -> PrimeResult {

for _ in 0..k {

let base = rng::u128_range(2, num - 1);

if mod_exp(base, num - 1, num) != 1 {

return PrimeResult::Composite;

}

}

PrimeResult::ProbablePrime;

}

An interesting implementation detail here, the function rng::u128_range() implies that it uniformly selects a random u128 from between 2 and num - 1 but I found it more practical to directly return a random number that’s a few bytes shorter than num. This greatly simplifies the logic while still giving us a mostly random sufficiently large number between 2 and num - 1. Moving forward this trick will be used whenever random values from a range are needed.

Here’s the full test! -

fn primes_128bit() -> u128 {

loop {

let num = (rng::u64() | 0x8000000000000001u64) as u128;

if fermat_test(num, 10) == PrimeResult::ProbablePrime {

return num;

}

}

}

➜ time cargo run --release

Prime found: 9944209443870115157

cargo run --release 0.03s user 0.01s system 99% cpu 0.033 total

That’s quite a bit faster than the ~6sec runs we were getting previously for 64 bits, but the fact that it uses a “probable prime” result might already tell you there’s a catch. The flaw in Fermat’s Little Theorem is - “pseudoprimes”. The relation defined by Fermat’s Little Theorem is true for all primes but is also additionally true for some composites. If the RNG generates one of these special composites, the code would say it is prime even when its not. These composites, also called “Fermat Pseudoprimes”, are rare but still numerous enough that we cannot rely on the accuracy of Fermat’s test.

Miller-Rabin Primality Test

The Miller-Rabin test is an improved probabilistic primality test that works on the same principles as Fermat’s test, but is much stronger and more practical to use due to a few key differences. For one, in this test no composite number is a strong pseudoprime for all bases at the same time in contrast to Fermat’s where such composites exist (called the Carmichael numbers). Miller-Rabin also has a substantially better error rate that can be called “insignificant” in most cases. In fact when looking around for what other people use, like OpenSSL’s source code and recommendations by NIST mentioned at the start, many sources recommend or are already using Miller-Rabin!

The math behind Miller-Rabin is not that important for implementing the algorithm itself, but nevertheless I will try to summarize what I understood. Feel free to skip it and jump directly to the code.

The relation we looked at in Fermat’s test was:

A more general form of can be written as , where:

- = An odd number left after factoring out all powers of 2 from .

- = The power of 2 as the factor of .

which implies:

Combining (1) and (2), we can say is a strong probable prime if one of these conditions is true:

- .

- for some .

Note: is equivalent to . It is left in the expanded form as I am working with unsigned ints and don’t have a way to represent -1.

Essentially, instead of doing a single test on it is doing multiple tests — starting with which is , then , , and so on until it reaches at .

Here’s my implementation, derived by combining the above math with the basic pseudocode described on Wikipedia:

fn miller_rabin_test(n: u128, k: usize) -> PrimeResult {

let mut s = 0;

let mut d = n - 1;

while d % 2 == 0 {

d = d / 2;

s += 1;

}

'main_loop: for _ in 0..k {

let base = rng::u128_range(2, n - 2);

let mut x = utils::mod_exp(base, d, n);

if x == 1 || x == n - 1 { continue 'main_loop; }

for _ in 0..(s - 1) {

x = utils::mod_exp(x, 2, n);

if x == n - 1 { continue 'main_loop; }

}

return PrimeResult::Composite;

}

PrimeResult::ProbablePrime

}

The first while loop factors out powers of 2, converting to . Then “main_loop” does all the tests mentioned above, squaring x (raising power by 2) and testing until it reaches .

And, the usual loop to find primes -

fn primes_128bit() -> u128 {

loop {

let num = (rng::u64() | 0x8000000000000001u64) as u128;

if miller_rabin_test(num, 10) == PrimeResult::ProbablePrime {

return num;

}

}

}

➜ time cargo run --release

Prime found: 15333511742700010117

cargo run --release 0.03s user 0.01s system 99% cpu 0.042 total

It is as fast as Fermat’s test but what about the “probable prime” thing here? Miller-Rabin’s worst case error is bound to , but for large values of , the error on average is much smaller like . What’s the chance that a Miller-Rabin test with returns a composite?

➜ python3 -c "print(f'chance of error = {8 ** -10 :.15f}%')"

chance of error = 0.000000000931323%

That is good enough for me :). For context that probability is exactly the same as the probability of getting all heads in 30 consecutive coin tosses (). In real cryptographic use you have to be a bit more cautious though in how the random bases are picked and assume adversarial conditions.

Finally, we have a way to generate random numbers and we have a primality test that is fast and efficient enough to work on big numbers. The only things missing are the big numbers themselves, so let’s venture even deeper…

1024 bits, A bit of a detour?

At this point it is obvious that we cannot go further than 64 bits by just using rust’s built-in integer datatypes. What we need is a “bigint”, or an implementation of arbitrary precision arithmetic usually called “bigint” or “bignum” in most languages. The sensible thing to do would be to import a bigint crate for rust and be done, but I am the one who gave myself the constraint of no external dependencies so I am going to follow it.

I guess it’s time to build a BigInt

“Build a BigInt” is not the answer to “how to generate big primes?”, but there won’t be any big primes (or composites for that matter) without a BigInt behind them, so we are going to take a bit of a detour and figure out how BigInt works.

Attempt #1 - BigInt as digits

After a quick skim of the Wikipedia page for arbitrary precision arithmetic and a brief research session, I found out there are a few ways to go about this. The easiest method was to store all the digits of your big number in an array and so that’s what I tried for attempt #1.

At first it starts out really simple. The number is represented as just a list of digits, so implementing addition and multiplication is also easy and I copied the basic pen and paper methods that we learn in middle school into code. Once I reached division I realized that this is not going to be that simple, and after a few failed attempts I gave up. If you want a challenge, pause and try to think how you might implement the pen and paper long division algorithm in code.

Attempt #2 - BigInt as binary

After my failed attempt #1, I thought - why not store the numbers in binary? Or more specifically, why not store the number as a list of 0s and 1s? And so began my second attempt.

This was my very simple BigInt, just an array of bool values -

const N: usize = 2048;

struct BigInt {

bits: [bool; N]

}

The actual size is 2048 instead of 1024 because as we saw earlier multiplying two N-bit numbers needs at most 2N bits of space.

Next we need some arithmetic. I still remember a few fragments of my logic and microprocessor class from university so what I did was basically implement a Full Adder in code to handle addition and subtraction. This is what addition looked like -

fn bigint_add(own: &[bool], other: &[bool]) -> [bool; N] {

let mut bits = [false; N];

let mut carry = false;

for (i, (d1, d2)) in own.iter().zip(other.iter()).enumerate() {

bits[i] = d1 ^ d2 ^ carry;

carry = (d1 & d2) | (carry & (d1 ^ d2));

}

if carry { panic!("Attempt to add with overflow"); }

bits

}

impl Add for BigInt {

type Output = Self;

fn add(self, other: Self) -> Self {

Self { bits: bigint_add(&self.bits, &other.bits) }

}

}

impl AddAssign for BigInt {

fn add_assign(&mut self, other: Self) {

self.bits = bigint_add(&self.bits, &other.bits);

}

}

Note: Here I am using the Add and AddAssign traits from rust to override the + and += operators for my BigInt type and will do the same for all other operators too.

Next I implemented the shift left (<<) and shift right (>>) operators. These just shift the entire list of bits left or right by the given amount, throwing away any overflow.

fn bigint_shl(own: &[bool], amount: usize) -> [bool; N] {

let mut bits = [false; N];

if amount > N { return bits; }

let mut i = amount;

for bit in own.iter().take(N - amount) {

bits[i] = *bit;

i += 1;

}

bits

}

fn bigint_shr(own: &[bool], amount: usize) -> [bool; N] {

let mut bits = [false; N];

if amount > N { return bits; }

let mut i = 0;

for bit in own.iter().skip(amount) {

bits[i] = *bit;

i += 1;

}

bits

}

Onward to multiplication. A really good thing about working in binary is that multiplication becomes very easy. Binary can only be 0 or 1, so no matter how long a number is, the only two results of its multiplication with a bit can be either 0 or itself. This reduces the classic multiplication algorithm to a much simpler one called “shift-and-add” and now it can use the newly implemented “shift” and “add” -

fn bigint_mul(own: &[bool], other: &[bool]) -> [bool; N] {

let mut result = BigInt::zero();

let n1 = BigInt::from(own);

let mut current;

for (shift, d2) in other.iter().enumerate() {

if !(*d2) { continue; }

for i in (N - shift)..N {

if own[i] { panic!("Attempt to multiply with overflow"); }

}

current = n1 << shift;

result += current;

}

result.bits

}

Finally we arrive at division, and the reason why I decided to go with binary, because binary long division also simplifies the problem quite a lot. Unlike long division with 0-9 digits, the digits of the quotient can only be 1 or 0 in binary which means the intermediate subtractions use either the divisor or 0. This is similar to the “shift-and-add” algorithm above and can be implemented with just “shift” and “sub” -

fn bigint_div(own_bits: &[bool], other_bits: &[bool]) -> ([bool; N], [bool; N]) {

let mut quotient = BigInt::zero();

let mut dividend = BigInt::from(own_bits);

let mut remainder = BigInt::zero();

let divisor = BigInt::from(other_bits);

if divisor == BigInt::zero() {

panic!("Attempt to divide by zero");

}

let mut no_of_bits = N;

while !dividend.bits[N - 1] {

dividend <<= 1;

no_of_bits -= 1;

}

for i in 0..no_of_bits {

remainder <<= 1;

remainder.bits[0] = dividend.bits[N - 1 - i];

quotient <<= 1;

if remainder >= divisor {

remainder -= divisor;

quotient.bits[0] = true;

}

}

(quotient.bits, remainder.bits)

}

After doing some testing to confirm the arithmetic works as expected I switched over all u128s in miller_rabin_test() and mod_exp() over to my BigInt, changed the RNG to fill 1024 bits, and ran the code. It didn’t finish in a few minutes and it was getting late at night, so I left it running and went to sleep. The next day I woke up to this -

➜ time cargo run --release

Prime found: 1100001101000111101111110011001111000010011111010110100010101011111110100010001100001101110101100100100110011110101100001000110010100011100101001010100110010110001101110111001110100110000001010111100000110111100010010010100110101011101010100101000100111110000001100000000011101111010100100101001111111100010010000110101010101000101010000011011110111101100011000010111011010000110101100111001101011000000001000100011001011100100000011110011011000000101000010001010001010010001101111100110001000011100110111100000010100000011101011100101101110100010111110000110000010111110000110101001110100100110101011101100111000100010110101001101110100111100010000000110000001001011100100100101001110100101100110110001001101110010100011011011110111100010011111011101010100010111010110101101010011110010111100100110111010111100101111111110010100101010111100111010001010101011100001000100101001111110101100101011100100001101111000100000001111100001001001010100101101111010000101001111111010110111111011101010100111011100111100001010101011101

cargo run --release 1959.67s user 0.09s system 99% cpu 32:44.90 total

Or expressed in base-10 -

137130462909417371581865483489043797725909059024661411704723085022816692663284008207826785132470756353352621332808019668785759110990576815741502628035997147255459016128105305451010585699069674494217365521467940783164171729442866016775055913991624626502191730619275815532321664270492537447637102633611801007453

This is the first 1024-bit prime number I found! I have solved the challenge!

But there was just one small issue - the runtime counter showed that it took ~30mins to find that prime. Although technically I have solved the challenge and also understood how to do it, taking 30 minutes per prime is not what I would call “reasonable time” especially when OpenSSL takes 30ms to do the same thing! The “reasonable time” limitation was part of the constraints because I want to learn both the skill of making it work and making it efficient :).

Attempt #3 - BigInt as bytes

The binary implementation I came up with in attempt #2 was immensely valuable. Not only did it give me my first 1024-bit prime, it was also an implementation that was proven to work correctly and I can test any further changes against it. This helped a lot with speeding up my experimentation and gave me the confidence to try some of the more difficult things.

When I started looking into why binary was so slow, the first thing I found was that in an array of bool, each bool would occupy a byte in memory and not a single bit as I thought it might. This stackoverflow answer has the reasons why. This meant my bool array of size 2048 was not using 2048 bits of memory as I had assumed, but 2048 bytes! That’s 2kb of memory just to store a single number. My binary implementation was probably spending almost all of its time waiting to read or write numbers from RAM due to L1 cache misses. I did not know at the time how to actually test this, but I thought let’s try a more memory efficient version anyway and see if it improves things.

The natural path to follow would then be, why not store the bits as byte sized chunks instead of individual bits in a list. It could store all 2048 bits in an array of 256 bytes. Surprisingly, addition/subtraction and multiplication worked with this new format without any major changes to the algorithms. Instead of adding bit by bit and using an extra bit as carry, it would now add byte by byte using an extra byte as carry. I switched multiplication from “shift-and-add” back to the pen and paper algorithm I initially had for digits, but using bytes in place of 0-9 digits, and it worked without any modifications too. For division I added a few extra lines of code to treat the list of byte chunks as a single list of bits and the rest remained unchanged.

With all these improvements, I got my second 1024-bit prime at 4min 43sec. Nice improvement over the original binary, but still not enough.

Attempt #4 - BigInt as u64 chunks

While doing more research on arbitrary precision arithmetic trying to find other ways to optimize I made an interesting discovery. There is a reason why my arithmetic algorithms in attempt #3 worked directly with bytes instead of bits. What I had unknowingly implemented was a digit based BigInt similar to what I tried in attempt #1, but using “high radix” digits. Attempt #1 used base-10 digits, 0 to 9, what us humans are comfortable with, but a computer can work in any base you want it to use. You’ve probably heard of base-16 (or hexadecimal) where the digits are 0-9 and A-F, or even base-64 which consists of all alphanumeric characters as the digits. My code from attempt #3 was effectively using base-255, with each byte acting as a single “digit”.

For example, here’s the same number expressed in different bases -

base-10: 3,095,627 (7 digits)

base-16: 2F3C4B (6 digits)

base-64: LzxL (4 digits)

base-255: 00101111 00111100 01001011 (3 digits)

(If you are wondering how I got the base-255 version, it is literally the binary representation of 3,095,627 split into 3 bytes.)

The funny thing is, I had read about this multiple times and dismissed it each time as being “too complex a concept for me to understand”, but after implementing it accidentally it finally clicked in my mind. Once I understood it the next logical thing to realize was that there’s no reason for it to be limited to byte sized digits and I can push it as far as it would go.

So here’s what my latest BigInt looks like -

const N: usize = 2048 / 64;

struct BigInt {

chunks: [u64; N]

}

It uses a array of 32 u64 chunks to store upto 2048 bits. As usual it goes to twice the size we need so it has enough room to store the multiplication result of 2 BigInts. Similarly, the highest it can go for each individual “digit” is u64 as it would need to use a u128 to store the multiplication result of two individual “digits”. The rest of the code remained mostly unchanged from attempt #3 with a few small changes, like changing the carry variable to u64 instead of a byte. At this point, the BigInt is using base-(264-1) or base-18446744073709551615 (🤯) and it only needs 16 “digits” to represent a number that uses 309 digits in base-10!

This now takes roughly 60-90 seconds to generate 1024-bit primes, which is a vast improvement over binary but still not fast enough.

Attempt #5 - BigInt as u64 chunks, but better

At this point I decided to run some simple benchmarks to try to find out what was slowing us down. Here’s the results:

| binary | u64 chunks | |

|---|---|---|

| a + b and a - b | 5537.35ns | 123.57ns |

| a * b | 1292283.14ns | 842.32ns |

| a / b and a % b | 733446.76ns | 44440.12ns |

| a << b and a >> b | 276.85ns | 140.88ns |

| a < b and a > b | 2506.02ns | 58.91ns |

(All times average of 1000 runs measured in nanoseconds)

This shows the significant progress made since binary, and rest of attempt #5 is going to be a list of things I did to make it even faster.

Division

The biggest thing that jumps out from the benchmarks is: division. Even though everything else has improved a lot, division is still using the same algorithm that it used in binary, still doing long division a single bit at a time. I have always seen people complain about division being slow, now I know why. Division really is a harder problem to solve compared to addition or multiplication.

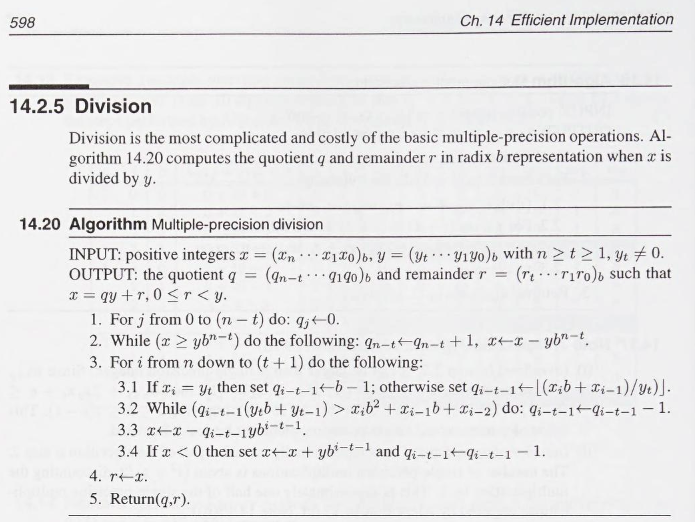

While looking for better algorithms, I saw multiple articles and sources pointing to a book — Handbook of Applied Cryptography. I found it on the Internet Archive, made an account, and borrowed it for 14 days. This is what I found on page 598:

Page 598, Handbook of Applied Cryptography

On the one hand it’s talking about “radix b representation” and by now I had done enough to understand that it was referencing the same “high radix digits” concept I had discovered earlier. On the other hand I understood nothing else at all and the book doesn’t make it any easier as it didn’t include any text explaining this algorithm. After staring at this page for 3 days straight and numerous failed attempts, I managed to write a working implementation. What I understood is that it is doing long division on base-N numbers, using the first 3 “digits” of the dividend and the first 2 “digits” of the divisor to estimate the current quotient “digit” in a loop until it finds the correct value, but I am not comfortable enough with the math behind it yet to explain it here. I have also heard that a very similar algorithm appears in The Art of Computer Programming, but there was no easy way to quickly refer it other than buying a copy, which I would get around to doing eventually.

Spending the effort to figure this out did pay off though, it saves about 40,000ns (40μs) per division, and there are quite a lot of division and modulus operations that happen for a single run of Miller-Rabin and 1000s of Miller-Rabin runs before a prime is found. An additional optimization I did was to check if the divisor is a single “digit” (a single u64 chunk) and then directly do long division using u128 to catch any overflows. This skips the costly algorithm entirely and is another one of those cases that frequently appear in Miller-Rabin.

fn bigint_div(mut dividend: BigInt, mut divisor: BigInt) -> (BigInt, BigInt) {

if divisor.is_zero() { panic!("Attempt to divide by zero"); }

if dividend < divisor { return (BigInt::zero(), dividend) }

// x = dividend

// y = divisor

// b = 64 (size of a "digit")

let mut quotient = BigInt::zero();

let mut lambda = 0;

let t = divisor.size();

if divisor.chunks[t] < u64::MAX / 2 {

while divisor.chunks[t] << lambda < u64::MAX / 2 {

lambda += 1;

}

divisor <<= lambda;

dividend <<= lambda;

}

let n = dividend.size();

// if y has only 1 "digit", then do long division directly

if t == 0 {

let divisor_digit = divisor.chunks[0] as u128;

let mut remainder = 0;

let mut current = 0;

for (i, chunk) in dividend.chunks.iter().enumerate().rev().skip(N - n - 1) {

current = (remainder << 64) + *chunk as u128;

quotient.chunks[i] = (current / divisor_digit) as u64;

remainder = current % divisor_digit;

}

return (quotient, BigInt::from(remainder >> lambda));

}

// step 2, align and then subtract y from x until x >= aligned

let mut aligned = divisor.clone();

for _ in 0..(n - t) {

aligned <<= 64;

}

while dividend >= aligned {

quotient.chunks[n - t] += 1;

dividend -= aligned;

}

let one = BigInt::from(1);

let mut x_3digit;

let mut y_2digit;

let mut q_u128;

let mut q_digit;

// step 3

for i in ((t + 1)..=n).rev() {

q_digit = BigInt::zero();

// step 3.1

if dividend.chunks[i] == divisor.chunks[t] {

q_digit.chunks[0] = u64::MAX - 1;

} else {

q_u128 = (dividend.chunks[i] as u128) << 64;

q_u128 += dividend.chunks[i - 1] as u128;

q_digit = BigInt::from(q_u128 / divisor.chunks[t] as u128);

}

// precalc 3digit x and 2digit y

x_3digit = BigInt::zero();

x_3digit.chunks[2] = dividend.chunks[i];

x_3digit.chunks[1] = dividend.chunks[i - 1];

x_3digit.chunks[0] = dividend.chunks[i - 2];

y_2digit = BigInt::zero();

y_2digit.chunks[1] = divisor.chunks[t];

y_2digit.chunks[0] = divisor.chunks[t - 1];

// step 3.2

while q_digit * y_2digit > x_3digit {

q_digit -= one;

}

// move quotient "digit" from temp bigint to its place in quotient

quotient.chunks[i - t - 1] = q_digit.chunks[0];

// precalc shifted y

let mut y_shifted = divisor.clone();

for _ in 0..(i - t - 1) {

y_shifted <<= 64;

}

// step 3.3 and 3.4

if dividend >= q_digit * y_shifted {

dividend -= q_digit * y_shifted;

} else {

dividend += y_shifted;

dividend -= q_digit * y_shifted;

quotient.chunks[i - t - 1] -= 1;

}

}

// rewind shifts by lambda to get actual remainder

dividend >>= lambda;

(quotient, dividend)

}

Multiplication

Next I looked at multiplication, the second biggest thing in the benchmarks. I had implemented essentially the same algorithm as the book, so no gains there. Rearranging the loop calculations cleverly to eliminate an extra BigInt I was using to store the intermediate results gave a 2x improvement to runtime.

Since I had already added a function to calculate the size (number of occupied chunks) for division, I used the same function here to only run the loops for non-zero chunks and also added extra checks to skip loop iteration if one of the chunks is zero. Although this adds complexity and branching inside the loops, it still helps improve performance as most of the time BigInt is supposed to store 1024 bits or less and thus be half empty. This gave another 2x improvement.

I could have gone to the Karatsuba algorithm or even fast Fourier transforms (FFT) which theoretically would give even better performance, but actually implementing it for a BigInt that I am building myself was too complex and my current multiplication was now fast enough that I did not pursue this path.

fn bigint_mul(own: BigInt, other: BigInt) -> BigInt {

let mut result = BigInt::zero();

let mut intermediate;

let mut carry;

let t = own.size();

let n = other.size();

if t + n + 1 >= N { panic!("Attempt to multiply with overflow"); }

for (j, chunk2) in other.chunks.iter().take(n + 1).enumerate() {

if *chunk2 == 0 { continue; }

carry = 0;

for (i, chunk1) in own.chunks.iter().take(t + 1).enumerate() {

if *chunk1 == 0 && carry == 0 { continue; }

intermediate = ((*chunk1 as u128) * (*chunk2 as u128)) + carry;

intermediate += result.chunks[i + j] as u128;

result.chunks[i + j] = intermediate as u64;

carry = intermediate >> 64;

}

result.chunks[t + j + 1] += carry as u64;

}

result

}

Addition and Subtraction

These are already super fast, I was surprised to see even my custom implementation already runs relatively close to the time it takes rust to add two native u128. I tried a few things but (as expected) I was not as clever as the compiler and whatever magic it does under the hood.

fn bigint_add(own: BigInt, other: BigInt) -> BigInt {

let mut sum;

let mut carry = 0;

let mut sum_overflow;

let mut carry_overflow;

let mut result = BigInt::zero();

let own_iter = own.chunks.iter();

let other_iter = other.chunks.iter();

for (i, (chunk1, chunk2)) in own_iter.zip(other_iter).enumerate() {

(sum, sum_overflow) = chunk1.overflowing_add(*chunk2);

(sum, carry_overflow) = sum.overflowing_add(carry);

result.chunks[i] = sum;

carry = sum_overflow as u64 + carry_overflow as u64;

}

if carry != 0 { panic!("Attempt to add with overflow"); }

result

}

Miller-Rabin

There were a bunch of optimizations that I found I could do in my implementation of Miller-Rabin. Here’s what it looked like initially -

fn miller_rabin_test(n: BigInt, k: usize) -> PrimeResult {

let zero = BigInt::zero();

let one = BigInt::from(1);

let two = BigInt::from(2);

let mut s = zero;

let mut d = n - one;

while d % two == zero {

d = d / two;

s += one;

}

'main_loop: for _ in 0..k {

let base = <<rng omitted>>;

let mut x = mod_exp(base, d, n);

if x == one || x == n - one { continue 'main_loop; }

while s > zero {

x = mod_exp(x, two, n);

if x == n - one { continue 'main_loop; }

s -= one;

}

return PrimeResult::Composite;

}

PrimeResult::ProbablePrime

}

And here some of the major optimizations I did:

- The

mod_exp()call on line 19 is no longer required as BigInt has enough memory to dox = (x * x) % ndirectly. - On line 15

mod_exp()was replaced by an simplified inline version which saved a lot of function call overhead. - The BigInt representation of “2” on line 4 was created just to do the even check on line 8. I wrote a simple

num.is_even()function that only needs to check if the last bit is0or1, and so removed a bunch of extra costly divisions and an extra BigInt allocation. - Similarly, the division on line 9 can be replaced with a

d >>= 1shift operation. In this case replacing another bunch of costly divisions with shifts is actually very beneficial compared to native code where this change is usually not worth it. - There are a lot of

+= oneand-= one(whereoneis BigInt representation of “1”), I added specialnum.increase()andnum.decrease()which for almost all cases would just do a u64 addition/subtraction on the last “digit”, and only go to the full BigInt addition/subtraction if the last “digit” was either0oru64::max, meaning the rare cases where it actually needs the BigInt to handle the overflow from adding or subtracting 1.

All of these and other changes not listed above individually account for just microseconds or even nanoseconds of advantage, but when they are run multiple times inside a loop inside thousands of Miller-Rabin tests it all adds up to a nice improvement in runtime. At least that is what I thought before I benchmarked them, and is_even() plus d >>=1 easily outclass everything else give a whopping 70,000ns advantage each! Here’s the final improved Miller-Rabin -

fn miller_rabin_test(n: BigInt, k: usize) -> PrimeResult {

let zero = BigInt::zero();

let one = BigInt::from(1);

let n_minus_1 = n.decrease();

let mut d = n_minus_1;

let mut s = zero;

while d.is_even() {

d >>= 1;

s = s.increase();

}

let mut bytes = [0; (1024 / 16)];

let mut x;

let mut base;

'main_loop: for _ in 0..k {

rng::insert_random_bytes(&mut bytes).unwrap();

base = BigInt::from(bytes.as_slice());

x = one;

while !d.is_zero() {

if !d.is_even() { x = (x * base) % n; }

d = d >> 1;

base = (base * base) % n;

}

if x == one || x == n_minus_1 { continue 'main_loop; }

while !s.is_zero() {

x = (x * x) % n;

if x == n_minus_1 { continue 'main_loop; }

s = s.decrease();

}

return PrimeResult::Composite;

}

PrimeResult::ProbablePrime

}

Primality testing logic

I modified the logic for testing primes with changes inspired by step 2 (64-bit primes) to add an additional trial division check at the start, using a precomputed list of the first 5000 small primes. This was infeasible for the majority of the time I was working on BigInt as trial division uses a lot of divisions, which were extremely slow. The trick to make it work is that all first 5000 small primes are small enough that they fit inside a single “digit” (a single u64 chunk). This means all divisions inside trial division would fall into the special case I just added, where it can perform the entire division using long division and u128 and skip the costly BigInt division algorithm. The same trial division function can also be used to generate the initial list of the first 5000 small primes. Optimizing trial division at step 2 did have some use after all!

Another change to the logic is that instead of reading /dev/urandom for each iteration of the loop and generating a new random number to test, it just adds 2 to the first random number to get the next candidate. Since the last bit is modified to be 1 we know it’s an odd number, which means adding 2 would take it to the next odd number. This can be further optimized by adding a dedicated function num.increase_by_2() which like num.increase() will only do the full BigInt addition for the overflow case, and otherwise would just do a u64 addition.

And finally, this is one of those problems that can be called “embarrassingly parallel” because there is no shared memory and no need to have any synchronization between threads. Instead of asking one CPU thread to find primes why not ask all 16 CPU threads and the fastest one wins!

- - -

Here are the same benchmarks after these optimizations:

| binary | u64 chunks | u64 chunks but better | |

|---|---|---|---|

| a + b and a - b | 5537.35ns | 123.57ns | 123.62ns |

| a * b | 1292283.14ns | 842.32ns | 295.04ns |

| a / b and a % b | 733446.76ns | 44440.12ns | 831.77ns |

| a << b and a >> b | 276.85ns | 140.88ns | 126.04ns |

| a < b and a > b | 2506.02ns | 58.91ns | 58.50ns |

| a / 2 (or a << 1) | 2638289.48ns | 75121.58ns | 60.89ns |

| a % 2 == 0 (or a.is_even()) | 2447553.14ns | 78400.87ns | 21.65ns |

| a - 1 (or a.decrease()) | 6179.48ns | 103.15ns | 67.54ns |

(All times average of 1000 runs measured in nanoseconds)

1024 bits, quite a bit faster!

Finally, we arrive at the conclusion to this very long article. Let’s combine everything together into a function to generate 1024-bit primes -

fn primes_1024bit() -> BigInt {

const P: usize = 1000;

let primes = utils::generate_small_primes::<P>();

let zero = BigInt::zero();

let mut small_prime = BigInt::zero();

let mut bytes = [0u8; 1024 / 8];

rng::insert_random_bytes(&mut bytes).expect("Cannot access /dev/urandom");

let mut num = BigInt::from(bytes.as_slice())

num.chunks[(1024 / 64) - 1] |= 0x8000000000000000u64;

num.chunks[0] |= 1;

'prime_loop: loop {

num = num.increase_by_2();

for i in 0..P {

small_prime.chunks[0] = primes[i];

if num % small_prime == zero {

continue 'prime_loop;

}

}

if miller_rabin_test(num, 10) == PrimeResult::ProbablePrime {

return num;

}

}

}

Call the above function in parallel threads -

fn run() {

let (tx, rx) = std::sync::mpsc::channel();

for _ in 0..16 {

let thread_tx = tx.clone();

std::thread::spawn(move || {

thread_tx.send(primes_1024bit()).unwrap();

});

}

let prime = rx.recv().unwrap();

prime.print_decimal();

}

And here are the results! -

➜ time cargo run --release

133639768604208228777408136159783586754136713880762782100572086187859339703910900715674773943439684405153138260262492990850200027881950953138966616704637409705491165541761840874200485820151419486204300434469857557841532664407934654743999891926036532834796558113864177048787433702650711105375897281079281724197

cargo run --release 0.58s user 0.01s system 690% cpu 0.086 total

I can finally say - challenge solved!

It takes on average 40ms to find a 1024-bit prime. Individual calls to prime_1024bit() can range from as low as ~8ms to high ~800ms due to randomness, but parallel execution and picking the fastest one smoothens that out. Here’s the average runtime from 100 runs -

➜ perf stat -r100 ./target/release/primes

--- outputs omitted ---

Performance counter stats for './target/release/primes' (100 runs):

--- other stats omitted ---

0.04109 +- 0.00307 seconds time elapsed ( +- 7.48% )

In the end, I am really happy that I gave myself this challenge. It forced me out of my comfort zone and pushed me to learn so many new things, both about programming and how to tackle new topics and do research. There are still tons of things and ideas left on my todo list for improvements I can make and things I could have done better, but I have to save at least some energy for my next project :D.

Discussion on - hackernews, reddit

The full code and repository can be found here - github.

It goes without saying that probably none of this is actually cryptographically secure, but that was never the point anyway.

Thanks for reading!

Tried to find big primes

Multiply overflowed

Had to build BigInt